Source Normalization for Language-Independent Speaker Recognition using i-Vectors

| Presented by: |

| ||

|---|---|---|---|

| Author(s): |

| ||

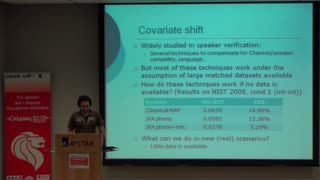

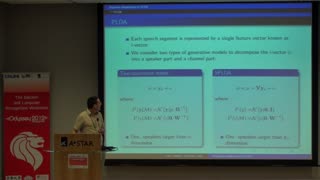

Source-normalization (SN) is an effective means of improving the robustness of i-vector-based speaker recognition for under-resourced and unseen cross-speech-source evaluation conditions. The technique of source-normalization estimates directions of undesired within-speaker variation more accurately than traditional methods when cross-source variation is not explicitly observed from each speaker in system development data. Source normalization can be incorporated into Within Class Covariance Normalization (WCCN) as an effective preprocessing step to Probabilistic Linear Discriminant Analysis (PLDA) based speaker recognition with i-vectors. This paper proposes to extend the application of source normalization to the reduction of language-dependence in PLDA speaker recognition by normalising for the variation that separates languages. Evaluated on the NIST 2008 and 2010 speaker recognition evaluation (SRE) data sets, the proposed Language Normalized WCCN (LN-WCCN) provides relative improvements of 26% in minimum DCF and 14% in EER under multilingual scenarios without detriment to common English-only conditions. LN-WCCN is also shown to significantly improve calibration performance when calibration parameters are learned from scores mismatched to evaluation conditions.