Pitch contour separation from overlapping speech

(longer introduction)

| Hiroki Mori (Utsunomiya University, Japan) |
|---|

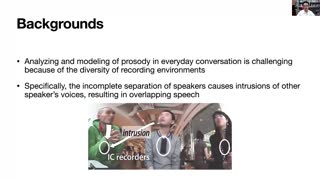

In everyday conversation, speakers’ utterances often overlap. For conversation corpora recorded in diverse environments, pitch extraction results in the overlapping parts may be incorrect. The goal of this study is to establish a technique for separating each speaker’s pitch contour from overlapping speech in conversation. The proposed method estimates the statistically most plausible fundamental frequency (fo) contour from the spectrogram of overlapping speech, given information that identifies the speaker to be extracted. Visual inspection of the separation results showed that the proposed model was able to extract accurate fo contours of the specified speakers from overlapping speech. Applying this method reduced voicing decision errors and gross pitch errors by 63% compared to simple pitch extraction from overlapping speech.
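The two error measures named above can be illustrated with a minimal sketch. It is not the evaluation code of this study. It assumes frame-aligned reference and estimated fo tracks in Hz, with 0 marking an unvoiced frame. The function names, the 20% tolerance, and the choice of denominators follow one common convention and are assumptions here; the abstract does not state which definitions were used.

```python
def voicing_decision_error(ref_f0, est_f0):
    """Fraction of frames whose voiced/unvoiced decision differs.

    Assumption: a value of 0 marks an unvoiced frame.
    """
    if len(ref_f0) != len(est_f0):
        raise ValueError("fo tracks must have the same number of frames")
    if not ref_f0:
        return 0.0
    mismatches = sum((r > 0) != (e > 0) for r, e in zip(ref_f0, est_f0))
    return mismatches / len(ref_f0)


def gross_pitch_error(ref_f0, est_f0, tolerance=0.2):
    """Fraction of frames voiced in both tracks whose estimate deviates
    from the reference by more than `tolerance` (relative error).

    Assumption: the 20% default is a conventional threshold, not a value
    taken from the study.
    """
    if len(ref_f0) != len(est_f0):
        raise ValueError("fo tracks must have the same number of frames")
    # Only frames that both tracks consider voiced are compared.
    both_voiced = [(r, e) for r, e in zip(ref_f0, est_f0) if r > 0 and e > 0]
    if not both_voiced:
        return 0.0
    gross = sum(abs(e - r) / r > tolerance for r, e in both_voiced)
    return gross / len(both_voiced)


# Hypothetical five-frame example (values in Hz, 0 = unvoiced).
reference = [0.0, 100.0, 100.0, 200.0, 0.0]
estimate = [0.0, 100.0, 130.0, 0.0, 150.0]
vde = voicing_decision_error(reference, estimate)
gpe = gross_pitch_error(reference, estimate)
```

In this toy example the voicing decision differs in two of the five frames, and one of the two frames voiced in both tracks deviates by more than 20%.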