Improving Polyphone Disambiguation for Mandarin Chinese by Combining Mix-pooling Strategy and Window-based Attention


Junjie Li (Ping An Technology, China), Zhiyu Zhang (National Tsing Hua University, Taiwan), Minchuan Chen (Ping An Technology, China), Jun Ma (Ping An Technology, China), Shaojun Wang (Ping An Technology, China), Jing Xiao (Ping An Technology, China)

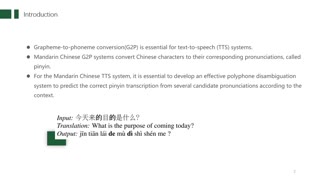

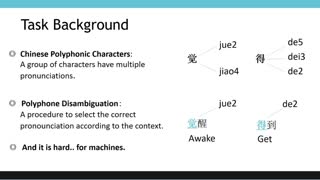

In this paper, we propose a novel system for polyphone disambiguation, a fundamental task in grapheme-to-phoneme (G2P) conversion for Mandarin Chinese, based on word-level features and window-based attention. The framework combines a pre-trained language model with explicit word-level information to extract meaningful context. Specifically, we employ a pre-trained Bidirectional Encoder Representations from Transformers (BERT) model to extract character-level features, and an external Chinese word segmentation (CWS) tool to obtain word units. We adopt a mixed pooling mechanism to convert character-level features into word-level features based on the segmentation results. A window-based attention module then incorporates the contextual word-level features for each polyphonic character. Experimental results show that our method achieves an accuracy of 99.06% on an open benchmark dataset for Mandarin Chinese polyphone disambiguation, outperforming the baseline systems.
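The pipeline above (character features, then word pooling, then windowed attention around the polyphonic character) can be illustrated with a minimal NumPy sketch. This is not the authors' implementation, and it rests on several assumptions. Random vectors stand in for BERT character features. The segmentation is given as a hypothetical list of word lengths, standing in for the output of a CWS tool. "Mixed pooling" is taken to be a fixed weighted sum `lam * max + (1 - lam) * mean` over each word's characters. The window attention is plain scaled dot-product attention, where the polyphonic character's feature is the query and the words within `window` positions of its own word are the keys and values. All function names and parameters (`mix_pool`, `window_attention`, `lam`, `window`) are hypothetical.

```python
import numpy as np


def mix_pool(char_feats, word_lengths, lam=0.5):
    """Pool character features (n_chars, d) into word features (n_words, d).

    Assumed mixing rule: lam * max-pool + (1 - lam) * mean-pool over each word's span.
    """
    assert sum(word_lengths) == char_feats.shape[0], "segmentation must cover every character"
    word_feats = []
    start = 0
    for length in word_lengths:
        span = char_feats[start:start + length]  # characters belonging to this word
        word_feats.append(lam * span.max(axis=0) + (1.0 - lam) * span.mean(axis=0))
        start += length
    return np.stack(word_feats)


def char_to_word_index(word_lengths):
    """Map each character position to the index of the word that contains it."""
    return np.repeat(np.arange(len(word_lengths)), word_lengths)


def window_attention(query, word_feats, center, window=2):
    """Scaled dot-product attention over the words within +/- window of the center word."""
    lo = max(0, center - window)
    hi = min(len(word_feats), center + window + 1)
    keys = word_feats[lo:hi]                      # (k, d) local word context
    scores = keys @ query / np.sqrt(query.shape[0])
    weights = np.exp(scores - scores.max())       # numerically stable softmax
    weights /= weights.sum()
    return weights @ keys, weights                # (d,) context vector, (k,) weights


def polyphone_features(char_feats, word_lengths, poly_index, window=2, lam=0.5):
    """Concatenate the polyphonic character's feature with its windowed word context."""
    word_feats = mix_pool(char_feats, word_lengths, lam)
    center = char_to_word_index(word_lengths)[poly_index]
    context, weights = window_attention(char_feats[poly_index], word_feats, center, window)
    return np.concatenate([char_feats[poly_index], context]), weights


# Usage: a 7-character sentence segmented into words of lengths 2, 1, 3, 1,
# with the polyphonic character at position 3 (inside the third word).
rng = np.random.default_rng(0)
chars = rng.normal(size=(7, 8))  # stand-in for BERT character features, d = 8
feats, attn = polyphone_features(chars, [2, 1, 3, 1], poly_index=3)
print(feats.shape, attn.shape)
```

In this sketch the concatenated vector would feed a classifier over candidate pronunciations. Restricting attention to a word window keeps the context local to the polyphonic character, while pooling over whole words injects the segmentation boundaries that BERT's character-level features lack.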