Combining Hybrid and End-to-end Approaches for the OpenASR20 Challenge

(Oral presentation)

| Tanel Alumäe (Tallinn University of Technology, Estonia), Jiaming Kong (Tallinn University of Technology, Estonia) |

|---|

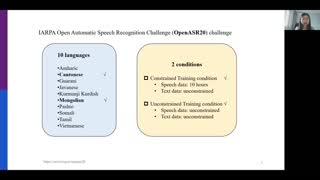

This paper describes the TalTech team submission to the OpenASR20 Challenge. OpenASR20 evaluated low-resource speech recognition technologies across 10 languages, using only 10 hours of training data in the constrained condition. Our ASR systems used hybrid CNN-TDNNF-based acoustic models, trained with different data augmentation strategies. We used language model adaptation, recurrent neural network language models and lattice combination for improving first pass results. The scores of our submissions were the best across all teams in six out of ten languages. The paper also describes post-evaluation experiments that focused on the unconstrained condition. We show that optimized N-best list combination of a CNN-TDNNF based system and a finetuned multilingual XLSR-53 model results in large reductions in word error rate. Using BABEL data and the combination of hybrid and end-to-end systems gives 12–22% relative improvement over the constrained condition results.