Attention-based cross-modal fusion for audio-visual voice activity detection in musical video streams

(3-minute introduction)

Yuanbo Hou (Ghent University, Belgium), Zhesong Yu (ByteDance, China), Xia Liang (ByteDance, China), Xingjian Du (ByteDance, China), Bilei Zhu (ByteDance, China), Zejun Ma (ByteDance, China), Dick Botteldooren (Ghent University, Belgium)

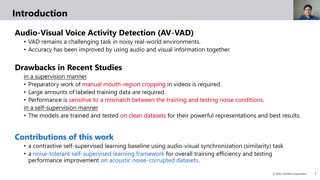

Many previous audio-visual voice-related works focus on speech and ignore the singing voice in the growing number of musical video streams on the Internet. Voice activity detection is a necessary step for processing such diverse musical video data. This paper attempts to detect the speech and singing voices of target performers in musical video streams using audio-visual information. To integrate information from the audio and visual modalities, a multi-branch network is proposed to learn audio and image representations; these representations are fused by attention based on semantic similarity, so that the acoustic representations are shaped by the probability of anchor vocalization. Experiments show that the proposed audio-visual multi-branch network far outperforms the audio-only model in challenging acoustic environments, indicating that cross-modal information fusion based on semantic correlation is sensible and successful.
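The fusion idea in the abstract can be illustrated with a minimal sketch. It is not the authors' network: the function names `cosine` and `fuse`, the `scale` parameter, the use of cosine similarity as the semantic similarity measure, and the sigmoid gate standing in for the probability of anchor vocalization are all assumptions made for illustration. Each audio frame embedding is compared with the image embedding of the same frame, and the resulting gate scales the audio representation.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v + 1e-8)

def fuse(audio_frames, image_frames, scale=5.0):
    """Gate each audio embedding by its similarity to the image embedding.

    Assumption: the gate (a sigmoid of the scaled similarity) plays the role
    of a per-frame probability that the on-screen performer is vocalizing.
    """
    fused, gates = [], []
    for a, v in zip(audio_frames, image_frames):
        gate = 1.0 / (1.0 + math.exp(-scale * cosine(a, v)))
        gates.append(gate)
        fused.append([gate * x for x in a])  # shape the acoustic representation
    return fused, gates

# Toy embeddings: frame 0 agrees across modalities, frame 1 disagrees.
audio = [[1.0, 0.0], [0.0, 1.0]]
image = [[1.0, 0.0], [0.0, -1.0]]
fused, gates = fuse(audio, image)
```

In this toy input the first frame receives a gate close to 1 and the second a gate close to 0, so audio evidence is kept only where the visual stream supports it. A trained model would learn the embeddings and the attention weights jointly rather than use a fixed similarity function.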